Log file analysis is a powerful tool that can provide businesses with insights into how search engines interact with their websites.

For brands eager to learn how to get indexed by Google faster, analysing logs offers actionable data that speeds up optimisation and highlights technical gaps. This article explores the significance of log file analysis specifically for Dubai’s unique digital landscape.

With a focus on sectors like real estate and finance, this guide will outline practical steps for leveraging log file analysis to enhance visibility and return on investment (ROI). Let’s dive into the details of this essential SEO practice.

Overview of Log File Analysis and Its Importance for SEO in Dubai

Log file analysis refers to the process of examining server logs to understand how search engines crawl and index a website. By analysing these logs, businesses can uncover valuable data about their site’s performance and identify areas for improvement.

In Dubai’s competitive market, particularly in high-stakes sectors like real estate and finance, log file analysis becomes crucial. It allows businesses to optimise their online presence by ensuring that their sites are easily accessible to search engines and users alike.

As part of a broader optimisation strategy, following a technical SEO checklist in Dubai helps ensure that insights from log file analysis are translated into actionable improvements.

Understanding Dubai’s Digital Landscape

The digital landscape in Dubai has witnessed rapid growth, with many businesses establishing a robust online presence. Areas like the Dubai International Financial Centre (DIFC) and Dubai Marina are hubs for industries such as finance and real estate, respectively.

At the same time, innovation-driven districts such as Media City have become central to digital growth, making SEO in Dubai Media City a priority for agencies and businesses alike.

As competition intensifies, local SEO becomes increasingly important. Businesses must ensure they are visible in search results to attract potential clients, making log file analysis an essential tool for maintaining a competitive edge.

Key Benefits of Log File Analysis for Dubai Businesses

Enhance Crawl Efficiency

Log file analysis helps businesses identify wasted crawl budget, particularly for large property portals, fintech platforms, and e-commerce stores in Dubai. By recognising which pages are frequently crawled and which are ignored, companies can optimise internal linking, sitemap priorities, and canonical signals. For example, a real estate site in Dubai Marina may discover Googlebot is over-crawling outdated listings while ignoring high-value investment guides. Addressing this imbalance ensures critical pages are indexed faster and updated more regularly.

Improve User Experience

Insights derived from log files can lead to better site architecture and faster load times. By analysing response times, businesses can detect server bottlenecks or heavy scripts that impact both users and search bots. This is crucial for retaining visitors in Dubai’s highly competitive digital market, where bounce rates directly impact conversions. A smoother experience not only satisfies Google’s Core Web Vitals but also reassures customers browsing high-value services such as wealth management, consulting, or property investment.

Strengthen SEO and Rankings

Log data reveals how Googlebot interacts with your site versus how users experience it. By aligning crawl data with SEO strategy, businesses can ensure that high-margin landing pages such as “SEO in Dubai Media City” service pages or “sell property fast in Business Bay” offers get sufficient crawler attention. This alignment drives more consistent indexing, which translates into improved rankings, better visibility in Google AI Overviews, and ultimately, more qualified traffic.

Detect and Fix Hidden Errors

Many businesses in Dubai only spot technical errors when rankings or traffic drop. With log analysis, issues such as 404 clusters, redirect loops, blocked resources, or mobile-only crawl failures are surfaced early. This proactive monitoring allows companies to fix problems before they affect organic performance. This is vital in industries like finance or e-commerce, where downtime or indexation loss can mean significant revenue impact.

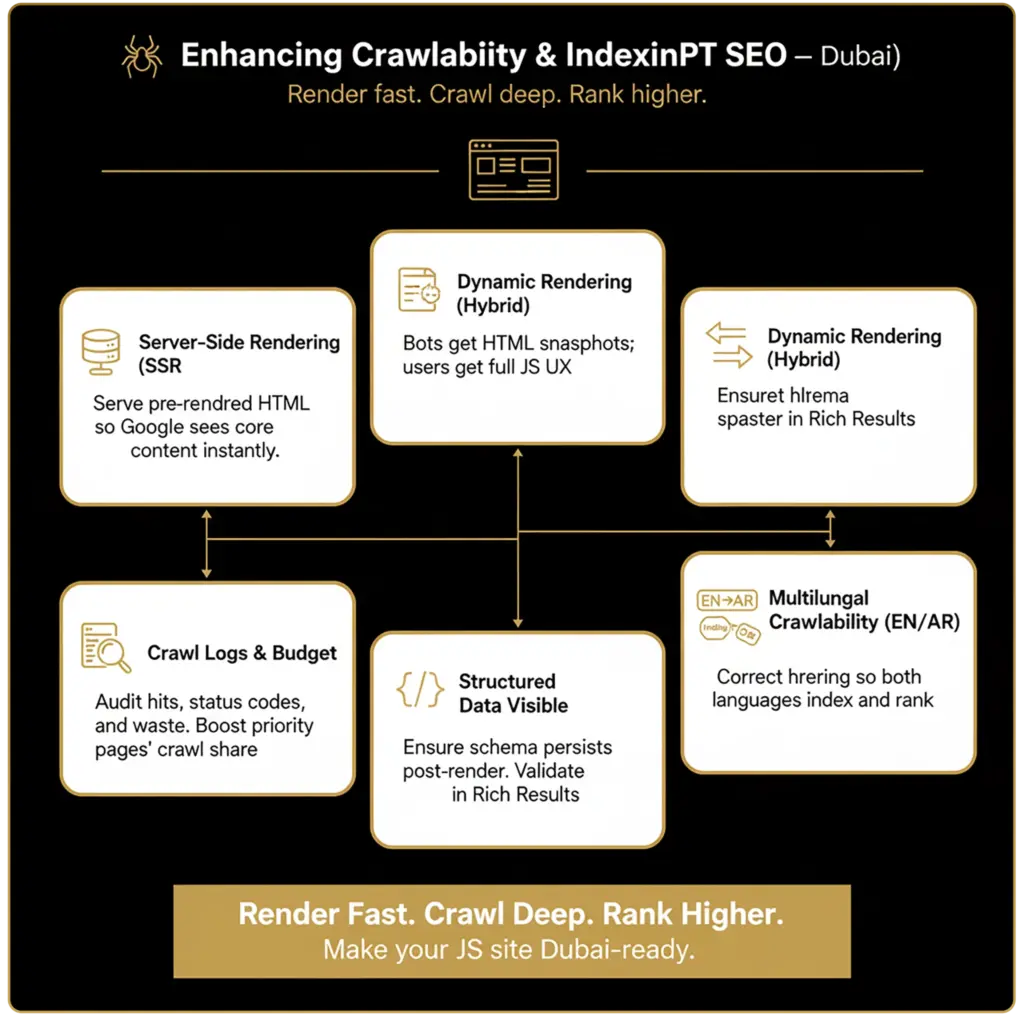

Support International and Multilingual SEO

Dubai’s digital market is unique in its bilingual nature, with most businesses operating in both English and Arabic. Log file analysis highlights whether Google is crawling both language versions effectively, and whether hreflang signals are being respected. For example, if ar-AE pages for real estate investments are not being crawled, businesses risk losing visibility with Arabic-speaking investors. Log monitoring helps maintain balance and ensures consistent coverage across all language and geo-specific pages.

Common SEO Challenges Faced by Dubai Companies

- Localised Issues: Many SMEs in Dubai struggle with misconfigured robots.txt files that block key pages from being crawled, significantly hampering their visibility.

- Inconsistent hreflang tags: This can affect international clients in Dubai’s diverse market, leading to confusion and poor user experience.

- Competition in High-Value Sectors: Standing out in saturated markets like luxury real estate and finance is a significant challenge for many businesses.

Practical Steps for Conducting Log File Analysis

Tools for Analysis

Use at least one SEO log analyser plus a general logging stack. Pick based on your stack, data volume, and whether you need always-on dashboards.

SEO-focused log analysers

- Screaming Frog Log File Analyser – fast desktop analysis of raw logs, sessions, and bot filtering.

- Sitebulb – crawler with integrated log analysis and crawl-log overlays.

- JetOctopus – cloud log processing at scale, strong orphan and crawl budget views.

- Oncrawl – enterprise-grade SEO data platform with log monitoring.

- Botify – enterprise crawling plus log ingestion with change impact tracking.

- Lumar, formerly Deepcrawl – site health platform with log integrations.

- Ryte – website quality with crawler and log insights.

Dubai note: if you use regional CDNs or UAE data centres, ensure origin logs include true client IP and user-agent headers so bot detection remains accurate behind the CDN.

Step-by-step process

- Define business goals and segments

- Decide what you want Google to crawl more often: money pages, Arabic vs English folders, marketplace listings, or news.

- Create segments upfront: /en-ae/, /ar-ae/, product vs blog, geo pages like Dubai Marina vs DIFC.

- Enable rich server logging

- Ensure logs include at minimum: timestamp, host, method, request URI, status, bytes, referrer, user-agent, response time.

- Keep at least 30 to 90 days rolling storage to see trend shifts around releases and campaigns.

- Anonymise IPs if required by internal policy and UAE PDPL.
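As a rough illustration of the logging fields above, the sketch below parses one access-log line into named fields and masks the last octet of IPv4 addresses. It assumes the common Apache/Nginx "combined" layout with the response time in seconds appended as a final field; that layout, the sample line, and the helper names are assumptions, so adjust the pattern to your own server configuration (for example, to capture the host field).

```python
import re
from datetime import datetime

# Assumed layout: "combined" log format plus a trailing response time in seconds.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<uri>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
    r'(?: (?P<response_time>[\d.]+))?'
)


def anonymise_ipv4(ip):
    """Zero the last octet of an IPv4 address; return other values unchanged."""
    parts = ip.split(".")
    if len(parts) == 4:
        parts[-1] = "0"
        return ".".join(parts)
    return ip


def parse_line(line):
    """Return a dict of typed fields for one log line, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    row = match.groupdict()
    row["ip"] = anonymise_ipv4(row["ip"])
    row["time"] = datetime.strptime(row["time"], "%d/%b/%Y:%H:%M:%S %z")
    row["status"] = int(row["status"])
    row["bytes"] = 0 if row["bytes"] == "-" else int(row["bytes"])
    if row["response_time"] is not None:
        row["response_time"] = float(row["response_time"])
    return row


# Hypothetical sample line, for illustration only.
sample = (
    '66.249.66.1 - - [10/Mar/2025:08:15:42 +0400] '
    '"GET /ar-ae/properties/ HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)" 0.231'
)
parsed = parse_line(sample)
print(parsed["uri"], parsed["status"], parsed["response_time"])
```

Lines that do not match return None, which makes it easy to count and inspect malformed entries before they skew any metric.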

- Export and secure the data

- Automate daily exports from web servers or CDN to a bucket or logging platform.

- Normalise formats to CSV or JSON Lines so your chosen analyser can ingest reliably.

- Verify real Googlebot

- Filter bots using reverse DNS verification to avoid spoofed user-agents. Google’s documentation on verifying Googlebot describes the procedure.

- Keep separate views for Googlebot Smartphone, Googlebot Desktop, and other bots like Bingbot.
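The reverse DNS check can be sketched with Python's standard socket module: look up the hostname for the IP, confirm it belongs to a Google crawler domain, then resolve that hostname forward and confirm it returns the same IP. This is a minimal sketch, not a complete verifier: the function name is hypothetical, the accepted suffixes cover only googlebot.com and google.com, and the forward lookup used here handles IPv4 only. The resolvers are passed in as parameters so the example can run offline with stub lookups.

```python
import socket

# Hostname suffixes accepted in this sketch for Googlebot.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(ip,
                          reverse_lookup=socket.gethostbyaddr,
                          forward_lookup=socket.gethostbyname_ex):
    """Reverse-resolve the IP, check the domain, then forward-confirm the hostname."""
    try:
        hostname = reverse_lookup(ip)[0]
    except OSError:
        return False  # no reverse DNS record
    if not hostname.endswith(GOOGLE_SUFFIXES):
        return False  # user-agent may say Googlebot, but the host does not
    try:
        forward_ips = forward_lookup(hostname)[2]
    except OSError:
        return False
    return ip in forward_ips


# Stub resolvers (hypothetical data) so the example runs without network access.
def stub_reverse(ip):
    return ("crawl-66-249-66-1.googlebot.com", [], [ip])


def stub_forward(hostname):
    return (hostname, [], ["66.249.66.1"])


print(is_verified_googlebot("66.249.66.1", stub_reverse, stub_forward))
```

In production, call the function without the stub arguments and cache the result per IP, since DNS lookups are slow compared with log parsing.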

- Ingest into an analyser and join with your URL inventory

- Load logs into one of the tools above. Also import your XML sitemaps, CMS exports, or data warehouse URL list.

- This join is what reveals orphan pages and indexable pages never visited by Google.
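At its simplest, the join is a pair of set differences between the URLs Googlebot requested and the URLs you expect to exist. The sketch below assumes both lists are already normalised to the same form (same host, trailing-slash, and case rules); the function name and sample URLs are hypothetical.

```python
def compare_inventory(crawled_urls, inventory_urls):
    """Compare URLs seen in logs with the known URL inventory."""
    crawled = set(crawled_urls)
    inventory = set(inventory_urls)
    return {
        # In sitemaps or the CMS, but never requested by Googlebot.
        "never_crawled": sorted(inventory - crawled),
        # Requested by Googlebot, but missing from the inventory (orphan candidates).
        "not_in_inventory": sorted(crawled - inventory),
    }


# Hypothetical example data.
crawled = ["/en-ae/listings/", "/en-ae/old-offer/", "/ar-ae/listings/"]
inventory = ["/en-ae/listings/", "/ar-ae/listings/", "/en-ae/investment-guide/"]

report = compare_inventory(crawled, inventory)
print(report["never_crawled"])
print(report["not_in_inventory"])
```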

- Baseline crawl health

- Metrics to chart weekly: unique URLs crawled, hits per template, crawl share by section, %2xx, %3xx, %4xx, %5xx, average response time, average bytes, depth, and last-seen date by URL.

- Dubai-specific checks: Arabic versions with hreflang="ar-AE" receive crawl hits, English en-AE versions are not being preferred incorrectly, and city hub pages for locations like Business Bay or Dubai Marina receive regular hits.
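A few of these baseline metrics can be computed directly from parsed log rows. The sketch below takes verified Googlebot hits as (URI, status) pairs and reports unique URLs, crawl share by top-level folder, and the share of each status class. Treating the first path segment as the "section" is an assumption that suits /en-ae/ and /ar-ae/ style trees; the function names and sample hits are hypothetical.

```python
from collections import Counter


def section_of(uri):
    """Return the first path segment, e.g. '/ar-ae/' for '/ar-ae/listings/?page=2'."""
    first = uri.split("?")[0].strip("/").split("/")[0]
    return "/" + first + "/" if first else "/"


def crawl_baseline(hits):
    """hits: iterable of (uri, status) pairs for verified Googlebot requests."""
    hits = list(hits)
    total = len(hits)
    if total == 0:
        return {"unique_urls": 0, "crawl_share": {}, "status_share": {}}
    by_section = Counter(section_of(uri) for uri, _ in hits)
    by_class = Counter(f"{status // 100}xx" for _, status in hits)
    return {
        "unique_urls": len({uri for uri, _ in hits}),
        "crawl_share": {name: count / total for name, count in by_section.items()},
        "status_share": {name: count / total for name, count in by_class.items()},
    }


# Hypothetical example data.
hits = [
    ("/en-ae/marina/", 200),
    ("/en-ae/business-bay/", 200),
    ("/ar-ae/marina/", 301),
    ("/en-ae/marina/", 404),
]
baseline = crawl_baseline(hits)
print(baseline["crawl_share"])
print(baseline["status_share"])
```

Running this on a weekly slice and charting the results makes a drop in /ar-ae/ crawl share visible long before it shows up in rankings.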

- Find waste and blockers

- Waste: parameter loops, infinite calendars, faceted filters, duplicate pagination, preview or staging URLs, heavy querystrings created by tracking.

- Blockers: 404 clusters from moved products, 301 chains, 403 or 429 from WAF rate limits, 5xx under traffic spikes, JS-rendered pages timing out.

- Signals: pages with noindex, conflicting canonicals, blocked assets in robots.txt that Google needs to render, large CSS or JS files that slow page rendering.
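One quick way to surface parameter waste is to count how many crawled URLs carry each query parameter. The sketch below uses Python's standard urllib.parse; the function name and sample URLs are hypothetical, and which parameters count as waste remains a judgment call for your site.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_hits(uris):
    """Count how many requested URLs include each query parameter name."""
    counts = Counter()
    for uri in uris:
        query = urlsplit(uri).query
        # Use a set so a parameter repeated within one URL is counted once.
        names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return counts


# Hypothetical example data.
crawled = [
    "/en-ae/listings?sort=price&page=2",
    "/en-ae/listings?sort=date",
    "/en-ae/investment-guide/",
    "/en-ae/listings?utm_source=newsletter&sort=price",
]
counts = parameter_hits(crawled)
print(counts.most_common())
```

Parameters that attract many hits but add no unique content, such as sort orders or tracking tags, are the first candidates for canonical rules or rewrites.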

- Prioritise fixes that increase useful crawl share

- Collapse parameters with canonical rules or server-side rewrites.

- Remove 301 chains by linking to final URLs and fixing legacy redirects.

- Allow essential assets and block only true waste. Do not block /wp-admin/admin-ajax.php or equivalent if your theme depends on it.

- Add pagination and faceted navigation rules with noindex, robots rules, or distinct crawl paths.

- Improve server response times and cache headers, especially for UAE visitors and Googlebot Smartphone.
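To remove redirect chains you first need the final target of each redirected URL. The sketch below follows a redirect map (source URL to target URL) until it reaches a URL that no longer redirects, and flags loops. How you build the map is up to you, for example from 301 and 302 rows in your logs paired with a crawl export; the function name, the hop limit, and the sample data are assumptions.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow a redirect map; return (final_url, path), or (None, path) for a loop."""
    path = [url]
    while url in redirects:
        url = redirects[url]
        if url in path or len(path) > max_hops:
            return None, path + [url]  # loop or suspiciously long chain
        path.append(url)
    return url, path


# Hypothetical example data.
redirects = {
    "/en-ae/offers-2023/": "/en-ae/offers-2024/",
    "/en-ae/offers-2024/": "/en-ae/offers/",
    "/a/": "/b/",
    "/b/": "/a/",
}

final, path = resolve_redirect("/en-ae/offers-2023/", redirects)
print(final, len(path) - 1)  # final target and number of hops

loop_final, loop_path = resolve_redirect("/a/", redirects)
print(loop_final, loop_path)
```

Any source URL with more than one hop should have its internal links and sitemap entries updated to point at the final target.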

- Strengthen discoverability for Google

- Keep XML sitemaps lean and fresh with lastmod.

- Ensure internal links point to canonical URLs in both Arabic and English trees.

- Check that hreflang maps each en-AE page to its ar-AE equivalent and vice versa.

- Surface high-margin Dubai pages in top-level nav and hubs so they attract more crawler entry points.
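The reciprocal hreflang check can be automated once you have each page's declared alternates, for instance from a crawler export. The sketch below assumes a dictionary keyed by page URL whose values map language codes to alternate URLs, and reports every alternate that does not link back. The data structure, function name, and sample URLs are hypothetical.

```python
def missing_return_links(hreflang_map):
    """hreflang_map: {page_url: {lang_code: alternate_url}}.

    Returns (page, alternate) pairs where the alternate does not reference the page.
    """
    problems = []
    for page, alternates in hreflang_map.items():
        for alternate in alternates.values():
            if alternate == page:
                continue  # self-reference, nothing to confirm
            declared_back = hreflang_map.get(alternate, {})
            if page not in declared_back.values():
                problems.append((page, alternate))
    return problems


# Hypothetical example data: the Arabic page omits its English alternate.
hreflang_map = {
    "/en-ae/invest/": {"en-AE": "/en-ae/invest/", "ar-AE": "/ar-ae/invest/"},
    "/ar-ae/invest/": {"ar-AE": "/ar-ae/invest/"},
}
problems = missing_return_links(hreflang_map)
print(problems)
```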

- Validate impact with before-after deltas

- Expect to see an increased share of Googlebot hits on your priority segments, lower 404 and 5xx rates, and steadier recrawl of key money pages.

- Pair log trends with Google Search Console impressions and average position for those segments.

- Automate monitoring

- Set alerts when 5xx or 429 exceed thresholds, or when crawl share of a business-critical folder drops.

- Schedule weekly exports of “URLs not crawled in the last 14 days but indexable” for quick triage.
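Both monitoring ideas reduce to small checks that a scheduled job can run over parsed log data. The sketch below lists indexable URLs with no Googlebot hit inside a 14-day window and raises simple alerts when the share of 5xx or 429 responses passes a threshold. The 5% threshold, the function names, and the sample data are assumptions; tune them to your own traffic.

```python
from datetime import datetime, timedelta, timezone


def stale_indexable_urls(last_seen, indexable_urls, now, max_age_days=14):
    """Indexable URLs never crawled, or last crawled before the cutoff."""
    cutoff = now - timedelta(days=max_age_days)
    return sorted(
        url for url in indexable_urls
        if url not in last_seen or last_seen[url] < cutoff
    )


def status_share(statuses, codes):
    """Fraction of responses whose status code is in `codes`."""
    statuses = list(statuses)
    if not statuses:
        return 0.0
    return sum(1 for status in statuses if status in codes) / len(statuses)


def crawl_alerts(statuses, threshold=0.05):
    """Return alert messages when 5xx or 429 shares exceed the threshold."""
    statuses = list(statuses)
    messages = []
    if status_share(statuses, range(500, 600)) > threshold:
        messages.append("5xx share above threshold")
    if status_share(statuses, {429}) > threshold:
        messages.append("429 share above threshold")
    return messages


# Hypothetical example data.
now = datetime(2025, 3, 20, tzinfo=timezone.utc)
last_seen = {
    "/en-ae/marina/": datetime(2025, 3, 18, tzinfo=timezone.utc),
    "/ar-ae/marina/": datetime(2025, 3, 1, tzinfo=timezone.utc),
}
indexable = ["/en-ae/marina/", "/ar-ae/marina/", "/ar-ae/business-bay/"]

stale = stale_indexable_urls(last_seen, indexable, now)
alerts = crawl_alerts([200] * 8 + [503, 429])
print(stale)
print(alerts)
```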

Analyst’s quick map: signal to action

- High hits on parameters or filters → consolidate with canonicals or rules, add noindex, provide clean links.

- Many 301s then 200 → update internal links and sitemaps to final targets.

- Low crawl on /ar-ae/ → check hreflang, internal linking depth, and sitemap coverage for Arabic.

- Spikes in 5xx at night UAE time → review deployments, autoscaling, and CDN cache rules.

- Large static files hit by Googlebot often → ensure cache headers and consider preloading critical CSS to reduce repeated fetching.

Practical tips for Dubai sites

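- Host or cache close to the UAE to reduce TTFB for local users. CDN PoPs in the UAE, such as Cloudflare’s, help here. Googlebot crawls mostly from US-based IP addresses, so edge caching that also serves other regions quickly matters for the crawler too.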

- Audit bilingual routing early. Keep consistent URL patterns like /en-ae/ and /ar-ae/ to simplify segmentation and avoid accidental duplication.

- During high-demand periods in the region, such as Ramadan or major sales, increase log sampling and bot rate allowances to avoid 429 throttling.

Case Study: Log File Analysis in Action

Consider a Dubai-based property portal that utilised log file analysis to optimise their site. By identifying which pages were underperforming in terms of crawl frequency, they restructured their content and improved site speed.

The outcomes were significant: an increase in visibility, enhanced crawl efficiency, and improved user engagement metrics, demonstrating the tangible benefits of log file analysis.

Unique Opportunities for Businesses in Dubai Through Log File Analysis

Capitalising on the Local Ecosystem

Dubai businesses can leverage insights from log files to tailor their marketing efforts to the local ecosystem. Understanding local search patterns can help companies target their audience more effectively.

Scalability for Growth

Effective technical SEO strategies derived from log file analysis can support the scalability of e-commerce businesses in the Dubai market. By addressing technical issues, companies can enhance their online presence and drive growth.

Conclusion

Log file analysis is an invaluable tool for SEO in Dubai, offering businesses the insights needed to enhance visibility and ROI. By adopting a data-driven approach, companies can navigate the competitive landscape more effectively.

As the digital market continues to evolve, utilising log file analysis will become increasingly critical for success. Businesses should consider integrating this practice into their SEO strategies to stay ahead of the competition.

Frequently Asked Questions

What is log file analysis and how does it relate to SEO?

Log file analysis involves examining server logs to understand how search engines crawl and index a website. This process is vital for SEO as it helps identify areas for improvement and ensures that a site is optimised for search engines.

How can Dubai businesses benefit from tracking Googlebot’s crawl patterns?

By tracking Googlebot’s crawl patterns, Dubai businesses can identify which pages are being crawled frequently and which are not. This information can help optimise content and improve site structure to enhance visibility.

What common mistakes should Dubai companies avoid when analysing log files?

Common mistakes include misconfigured robots.txt files blocking key pages and improper implementation of hreflang tags, which can lead to significant visibility issues in a diverse market like Dubai.

Which tools are recommended for effective log file analysis?

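Dedicated log analysers such as Screaming Frog Log File Analyser are well suited to this work, alongside the other platforms listed earlier in this guide. The Crawl Stats report in Google Search Console is a useful complement for checking crawl trends, although it does not expose raw server logs.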

How can log file analysis improve my website’s performance and user experience in Dubai?

Log file analysis can identify technical issues that may be hindering site performance, such as slow load times or crawl errors. By addressing these issues, businesses can enhance user experience and improve overall site performance. Use our free SEO audit tool to quickly identify SEO issues and unlock opportunities to dominate your niche online.